With nearly every aspect of our daily lives tied to technology, you might expect society to largely trust its tech. However, that isn’t the case. In fact, the opposite appears closer to the truth: a recent study concluded that approximately 86% of individuals no longer trust Big Tech companies. Our assessment? This distrust stems from the high number of security vulnerabilities and breaches suffered by even the largest tech companies, paired with a track record of poor transparency about these issues.

And to be honest, as an industry-leading iPhone app developer in New York City, we’ve received many questions from our clients about risks and vulnerabilities in their existing apps and technology stacks.

So, what gives? Well, there are a number of reasons for this decline in trust. For one, a large part of society is struggling to keep up with the rapid progression of technology. In the age of digital transformation, many individuals feel that their tech has become too smart and reaches too far into their personal lives, eroding their sense of digital privacy. Unfortunately, these individuals are not wrong, and it’s clear that something needs to be done about it.

As a top mobile app development company, we consider it our duty to stay ahead in the fight to keep our app development practices safe for our clients, so we thought it would be useful to discuss several technologies that carry these security risks. We will also briefly explore how a zero-trust approach can help preserve confidentiality, integrity, and availability (CIA) in the era of smart technology.

The Era of 5G

Nowadays, most of us know 5G as the “ultra-fast” cellular connection, signaled by that small icon on our smartphones, that reduces lag when we’re online. Did you know, however, that 5G is being used for more than just browsing Amazon or streaming shows on Netflix?

Just as consumers use 5G for faster speeds and better connectivity, businesses and enterprises are using 5G to achieve seamless interoperability between their network devices. In fact, 5G networks are projected to cover over one-third of the world by 2025. It’s no surprise, then, that 5G is also making its way into the realm of cybersecurity, thanks to security improvements over earlier generations, such as encrypting a subscriber’s permanent identifier before it is sent over the air.

However, these benefits come with serious concerns of their own. More specifically, as 5G connects more devices around the world, hackers gain the opportunity to access more sensitive data than ever before. Of course, if implemented correctly, 5G should increase the protection of this data, but we still have a long way to go before 5G can be considered foolproof.

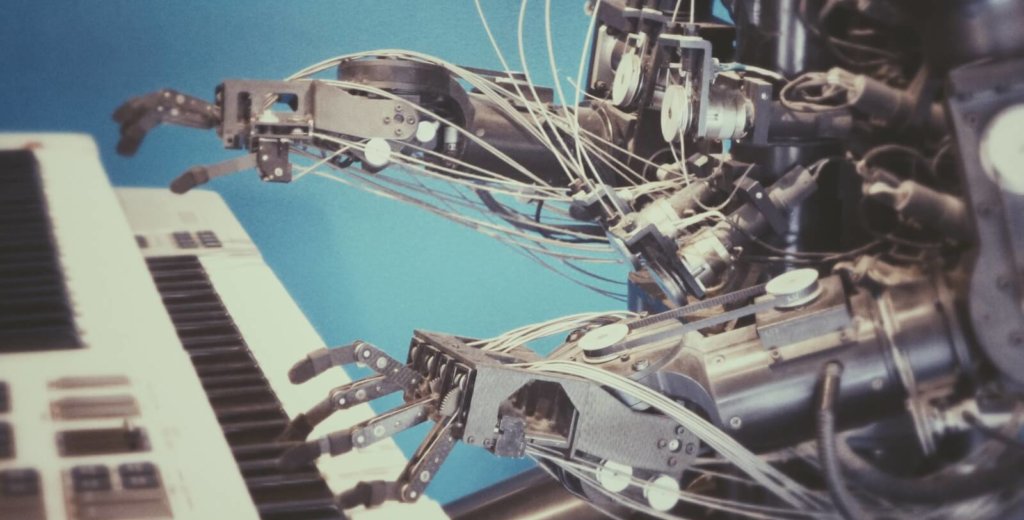

Artificial Intelligence

Artificial Intelligence (AI), much like 5G, is another technology that has progressed rapidly over the past few years. From eCommerce to sports analytics, AI has expanded into virtually every industry, and cybersecurity is no exception. In fact, cybersecurity professionals are using AI to reinforce conventional defenses, for example by flagging unusual network activity faster than manual review could, ultimately reducing the risk of a successful attack.

Unfortunately, cybercriminals can also exploit these same AI systems for malicious purposes. As AI continues to expand, the need to regulate it grows as well. Without multiple levels of governance over the use of AI, we fear that smart technology will continue to be used unethically to steal sensitive information.

Deepfake Technology

Unlike 5G and AI, deepfake technology offers no real benefit to cybersecurity. In fact, authenticity is becoming the next big dilemma in the age of data manipulation, and deepfake technology is at the forefront of this problem.

What is deepfake technology? Well, deepfakes use a form of AI called deep learning to fabricate realistic images, audio, or video of events that never happened, with the intent of passing them off as real. In essence, this technology compromises the authenticity of recordings of events and people. Hence, there’s no denying that deepfake technology is a growing threat in the realm of cybersecurity.

To combat this technology, cybersecurity professionals are beginning to use AI-powered tools to detect when manipulation has occurred. Unfortunately, deepfake technology is showing no signs of abating, making the verification of authenticity a Herculean task for future cybersecurity professionals.

Should We Be Skeptical of These Technologies?

Well, yes and no. As we discussed previously, both 5G and AI are being used to strengthen current cybersecurity platforms and tactics, so we shouldn’t shun them entirely. However, because these technologies can also be used maliciously, it’s fair to say that we should maintain a healthy skepticism with regard to 5G and AI.

What do we mean by this? A good example of healthy skepticism is zero trust security. Built on the assumption that no user or device should be trusted by default, even one already inside the network, a zero trust model verifies every access request before granting it, which drastically reduces the potential for unauthorized access. By adopting a zero trust security model, we believe that confidentiality, integrity, and availability (CIA) can be maintained even when attackers are using smart technology like deep learning.
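To make the zero trust idea more concrete, here is a minimal Python sketch of a per-request access check. It assumes a simple HMAC-signed session token, a device-compliance flag, and a role-based permission table; the names `SECRET_KEY`, `POLICY`, `sign_token`, `AccessRequest`, and `authorize` are hypothetical and used only for illustration, not taken from any particular product or framework. The point it shows is that identity, device health, and permissions are checked on every request, and being on the internal network earns no extra trust.

```python
import hashlib
import hmac
import time
from dataclasses import dataclass
from typing import Optional

# Hypothetical signing key for illustration only; a real system would rely on
# an identity provider and managed keys, never a hard-coded secret.
SECRET_KEY = b"example-secret-key"

# Hypothetical least-privilege policy: the roles allowed to perform each action.
POLICY = {
    "read_orders": {"support", "admin"},
    "delete_orders": {"admin"},
}


def sign_token(user: str, role: str, expires_at: int) -> str:
    """Issue a token of the form 'user:role:expires_at:signature'."""
    payload = f"{user}:{role}:{expires_at}"
    signature = hmac.new(SECRET_KEY, payload.encode(), hashlib.sha256).hexdigest()
    return f"{payload}:{signature}"


@dataclass
class AccessRequest:
    token: str
    device_compliant: bool       # assumed to come from a device-management check
    from_internal_network: bool  # deliberately ignored by authorize()
    action: str


def authorize(req: AccessRequest, now: Optional[int] = None) -> bool:
    """Verify identity, device, and permission on every single request."""
    now = int(time.time()) if now is None else now

    # 1. Verify identity: the token must parse and carry a valid signature.
    try:
        user, role, expires, signature = req.token.split(":")
        expires_at = int(expires)
    except ValueError:
        return False
    payload = f"{user}:{role}:{expires}"
    expected = hmac.new(SECRET_KEY, payload.encode(), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(signature.encode(), expected.encode()):
        return False

    # 2. Reject expired sessions.
    if now >= expires_at:
        return False

    # 3. Verify the device, even when the request comes from inside the network.
    if not req.device_compliant:
        return False

    # 4. Enforce least privilege: the role must be allowed to take this action.
    return role in POLICY.get(req.action, set())
```

Notice that `from_internal_network` never enters the decision. In a traditional perimeter model, that flag alone might have been enough to let a request through; under zero trust, a request from a non-compliant device or with a tampered token is refused no matter where it originates.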