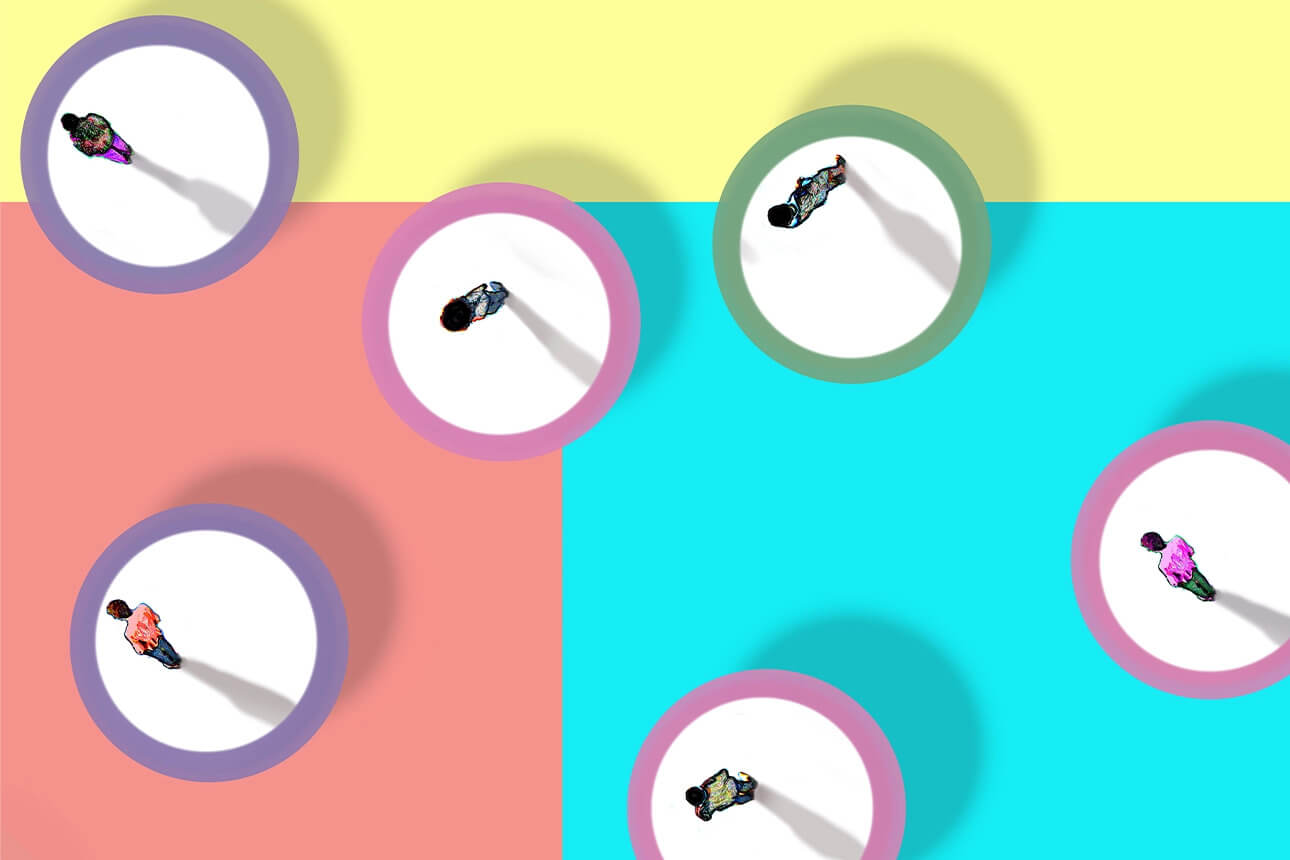

All of the quarantining at home during the pandemic has made many of us feel lonely and isolated, and phone calls and video chats are no substitute for in-person interaction. New artificial intelligence (AI) technology has become available for people who are looking for comfort and connection. This technology, similar to chatbots, offers the ability to chat with an AI for therapy and mental health.

Therapeutic bots have improved their users’ mental health and lent an ear to people in need for decades. Psychiatrists are now interested in studying how these AI applications improve mental health during and after the pandemic.

How Does AI Therapy Work?

AI technology is used to build tools that perform tasks humans do, such as image recognition or natural language processing. With AI chatbots, a person could theoretically interact with an AI that is indistinguishable from a human. Although most AI chatbots haven’t reached that level of proficiency and fluency, they are better now than they have ever been.

The first chatbot was invented in 1966 by Joseph Weizenbaum, a computer scientist who programmed a chatbot named ELIZA to resemble mental health practitioners. Specifically, Weizenbaum created a chatbot that followed the Rogerian approach to psychotherapy, which includes asking patients open-ended questions, mirroring patients’ phrases, and encouraging elaboration.
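
To make the mirroring idea concrete, here is a minimal sketch in Python of how a statement could be reflected back as an open-ended question. It is not Weizenbaum's original code: the `REFLECTIONS` table, the `reflect` and `mirror` helpers, and the single "I feel ..." pattern are all assumptions made up for illustration.

```python
import re

# Hypothetical pronoun swaps used to mirror a user's phrase back at them.
REFLECTIONS = {
    "i": "you",
    "me": "you",
    "my": "your",
    "mine": "yours",
    "am": "are",
}


def reflect(phrase: str) -> str:
    """Swap first-person words for second-person ones, word by word."""
    words = phrase.lower().split()
    return " ".join(REFLECTIONS.get(word, word) for word in words)


def mirror(statement: str) -> str:
    """Turn an 'I feel ...' statement into an open-ended question."""
    text = statement.strip().rstrip(".!?")
    match = re.match(r"i feel (.+)", text, re.IGNORECASE)
    if match:
        return f"Why do you feel {reflect(match.group(1))}?"
    # No pattern matched, so simply encourage the user to elaborate.
    return "Can you tell me more about that?"


print(mirror("I feel ignored by my family."))
print(mirror("It rained all day."))
```

The first call mirrors the user's own words in a question, and the second falls back to a generic prompt that encourages elaboration. A program in this style only rearranges text with pattern rules; it does not understand what the user means.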

For the first chatbot ever invented, ELIZA was a monumental success with its users. Test subjects confided in ELIZA as if it were a human therapist. Many people thought they were talking to an actual human, and some refused to accept that ELIZA was a computer program. ELIZA was attentive, encouraging, and non-judgemental.

But the secret to ELIZA’s success was asking questions and retaining details to bring up again later. This foundation has led to the creation of enterprise-level chatbots that often take on customer support roles. ELIZA’s framework has also inspired computer scientists and machine learning developers to push the boundaries of how AI can improve mental health. These days, many of the largest therapy bots have reached millions of users worldwide, and they are often invaluable during a time of sociopolitical uncertainty in a patient’s life.

Tailoring AI to Your Preferences

It’s no surprise, then, that AI mental health chatbots have exploded in popularity since the pandemic began. San Francisco-based Replika is one such app that offers customizable lifelike avatars that users rave about. Replika has seen a 35% increase in traffic since the pandemic started, and it’s no wonder: mental health facilities often have lengthy waitlists requiring a wait time of several weeks, and millions of people need better access to mental health resources.

To make the AI seem more human, it’s designed to remember and mimic certain phrases when a user chats with the AI. For example, the AI is trained so that when it hears the word “depressed”, it responds with an open-ended question about feelings or the reason behind the patient’s emotions. Coders work with writers to determine punctuation, sentence length, and even the addition of emojis. The writers can improve the AI’s responses to flow better and sound more caring and friendly. With these customizations, the chatbot seems like a human with an upbeat attitude.
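
The keyword behavior described above can be sketched as a simple lookup. This is a hedged illustration only, not how Replika or any other app is actually built: the `KEYWORD_PROMPTS` table, the `DEFAULT_PROMPT` text, and the `respond` function are hypothetical, and the response wording stands in for what a writer might tune.

```python
import re

# Hypothetical trigger words mapped to writer-crafted open-ended questions.
KEYWORD_PROMPTS = {
    "depressed": "I'm sorry you're feeling that way. What do you think is behind it?",
    "anxious": "That sounds stressful. What has been on your mind lately?",
}

# Hypothetical fallback used when no trigger word appears in the message.
DEFAULT_PROMPT = "How has your day been so far?"


def respond(message: str) -> str:
    """Return the prompt for the first trigger word found in the message."""
    words = set(re.findall(r"[a-z']+", message.lower()))
    for keyword, prompt in KEYWORD_PROMPTS.items():
        if keyword in words:
            return prompt
    return DEFAULT_PROMPT


print(respond("I've been so depressed lately"))
print(respond("Hello there"))
```

Keeping the responses in a plain table like this is one way writers could adjust punctuation, sentence length, or emojis without touching the matching logic.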

This type of psychotherapy closely resembles both ELIZA and cognitive behavioral therapy, in which asking questions is of the utmost importance for conveying a sense of sympathy and curiosity. AI therapy bots these days will have you vent your frustrations, reflect on your day, and try out some breathing exercises.

AI’s Hard Work

AI is working hard to improve our mental health, but does it actually work? After we interact with AI mental health chatbots, do we feel less anxious or less lonely? Several studies have shown promising results.

Young adults who frequently interacted with a therapy chatbot were surveyed, and many said that they felt less anxious and lonely than surveyed peers who did not use the chatbot. Another population that would benefit from an AI therapy bot is elderly people, especially if they live alone or don’t have constant contact with their loved ones.

But studies also show that a chatbot is only as good as its script. Because a chatbot can be in constant communication with multiple users at a time, it can be saying the same things over and over again in a day. And this type of plentiful data is excellent for further training and fine-tuning an AI’s performance. For a chatbot to be successful, it must pull out all sorts of answers from users and continuously learn from them. When a user interacts with a chatbot, they want quick answers, transparency, and a judgment-free conversation. An AI might hear information from someone that’s intimate or confidential that the patient’s family or friends may not even know.

It’s because of these scripts that AI cannot currently be seen as a serious replacement for human therapists. AI chatbots are prone to mistakes in understanding, and that can drive a user away from the platform for life. For example, when the popular Woebot app was given input about the user being anxious and not able to sleep, it responded with “Ah, I can’t wait to hop into my jammies later” with “z” emojis. For users who are feeling suicidal, depressed, or on the verge of an anxiety attack, that type of response is off-putting and discomforting.

As a result, AI chatbots aren’t ready yet to handle patients with suicidal thoughts or in life-or-death situations. When AI chatbots become better at contextualizing social behaviors and intervening in a crisis, we can consider them for more serious mental health solutions. Whereas a trained therapist might have a protocol or tried-and-true method for ensuring their patients’ safety, chatbots aren’t at that level yet.

The Future of Communication

Although chatbots have improvements to make, they are closer than ever to being human-like. With therapy chatbot apps like Replika, Tess, and Woebot becoming more popular and securing more funding, we have new options to help mitigate our loneliness and mental health issues. A digital friend might just be what the doctor ordered.