The model simulates how a human’s brain processes information using neurons and synapses (our own “hardware”) instead of computer CPUs. Intrigued? Read on, it gets even more interesting.

Quantum Computing

Using the inherent quantum properties of cobalt atoms, a team of researchers from Radboud University in the Netherlands created organized networks of atomic spin states. With these networks, they developed a “quantum brain” that can process information and store it in memory. This is no longer “artificial” intelligence; it’s the closest computer model we have to a real human brain and how it works.

It’s well known that machine learning algorithms consume a lot of energy and require a lot of data. Google, Apple, and Amazon can absorb those costs with enormous data centers, but that isn’t realistic for the hundreds of smaller AI research firms and institutions. Experts also worry that gains in computing power are leveling off, even though Moore’s Law predicted that the number of transistors on a chip would keep doubling roughly every two years.

This new computing method is a promising alternative to overcome these limitations. And, according to the lead author, Dr. Alexander Khajetoorians, the new method “could be the basis for a future solution for applications in AI.”

Integrating Neuroscience

Many AI methods, like deep learning, are already modeled loosely after the human brain. But our current computing technology keeps memory and computing units separate from each other, which costs time, energy, and resources whenever data has to be shuffled back and forth for complex algorithms that require a lot of training data. Experts are concerned about how far we can optimize AI algorithms for efficiency with our current computing technology.

In contrast, the cobalt method allows us to store and compute in one unit. It forgoes CPUs, memory, and chips, allowing for faster computation and memory retrieval as well as less energy consumption. The cobalt method is also extremely flexible: if the algorithm learns that a new factor makes it perform better, it has the capacity to store this updated information in relation to the original for faster retrieval next time. This is incredibly similar to the brain, and it could be the future of computing technology.

Cobalt’s Quantum Spin States

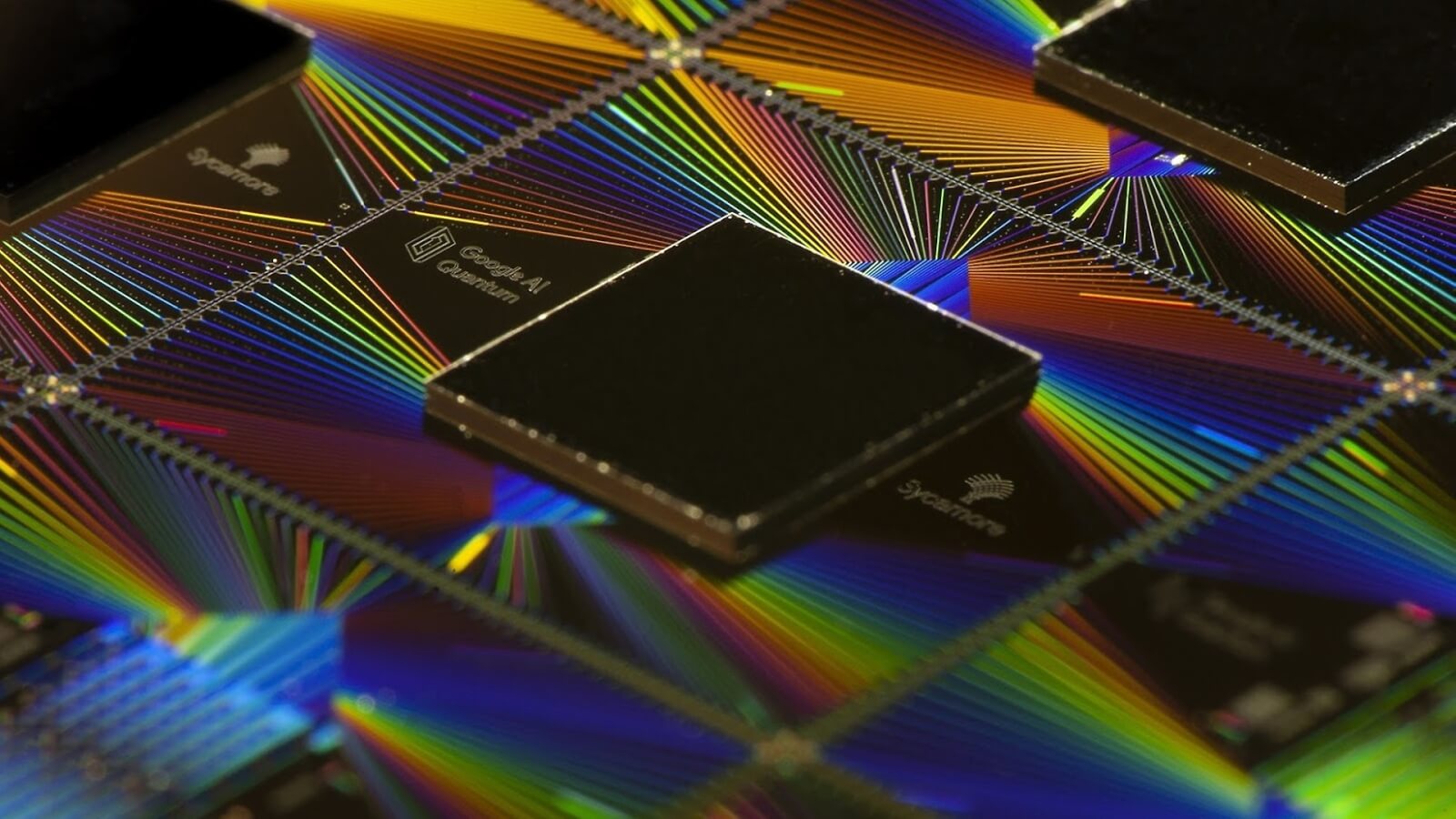

The Radboud University research team has been working on this problem for years. In 2018, the group discovered that a single cobalt atom could possibly unlock a computing model closer to neurons and our brains. They realized they could use several properties of quantum spin states to make this a reality. For example, an atom can be in multiple spin states at the same time, with a certain probability of being found in each one. That’s similar to how neurons decide to fire and how synapses pass on data.

Another property they explored was quantum coupling, in which two atoms are linked so that the quantum spin state of one atom influences the state of the other. This is also similar to how neurons communicate.
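To make those two ideas a little more concrete, here is a minimal sketch in Python. It is a classical toy analogy, not a quantum simulation and not the team’s method: each “atom” is a unit that randomly takes the value +1 or -1, and a coupling strength decides how strongly one unit’s state pulls on its neighbor. The function names (`sample_spin`, `agreement_rate`) and the parameter values are made up for illustration.

```python
import math
import random


def sigmoid(x):
    """Squash any real number into a probability between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-x))


def sample_spin(coupling, neighbor, rng):
    """Pick +1 or -1 at random; a positive coupling favors matching the neighbor."""
    p_up = sigmoid(2.0 * coupling * neighbor)
    return 1 if rng.random() < p_up else -1


def agreement_rate(coupling, steps=20000, seed=0):
    """Resample two coupled units in turn and return how often they agree."""
    rng = random.Random(seed)
    a, b = 1, -1
    agree = 0
    for _ in range(steps):
        a = sample_spin(coupling, b, rng)  # a responds to b's current state
        b = sample_spin(coupling, a, rng)  # b responds to a's new state
        agree += (a == b)
    return agree / steps


if __name__ == "__main__":
    print(agreement_rate(0.0))  # uncoupled: the units agree about half the time
    print(agreement_rate(1.0))  # coupled: the units agree far more often
```

With no coupling, the two units behave like independent coin flips. Turn the coupling up and they start moving together, which is the loose sense in which one unit “communicates” with the other.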

With these two insights, the team worked on building a computing method that was modeled after neurons and synapses. They placed multiple cobalt atoms onto a semiconducting surface made of black phosphorus. Then they took on three questions: could they induce networking and firing between the cobalt atoms, could they simulate a neuron firing, and could they embed information in the atoms’ spin states?

After working out a “yes” to those questions, the team used weak currents to send the system 0s and 1s, which could be translated into probabilities of the atoms encoding 0 or 1. Then, the team charged the atoms with a small voltage to simulate how our neurons receive electrical signals before they act (or don’t act). The result was surprising and significant. The voltage caused the atoms to behave in two different ways: it caused them to “fire” and send information to the next atom, and it changed their structure slightly afterward as we see with synapses.

Khajetoorians said, “When stimulating the material over a longer period of time with a certain voltage, we were very surprised to see that the synapses actually changed. The material adapted its reaction based on the external stimuli that it received. It learned by itself.”
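The kind of adaptation described in that quote can be illustrated with another small classical toy in Python. This is an assumption-heavy sketch, not the experiment itself: a fast unit flips randomly under a constant “voltage,” while a slow variable (called `weight` here, a hypothetical name) gradually drifts toward the unit’s average state. After long stimulation, the slow variable holds a trace of the stimulus.

```python
import math
import random


def adapt(voltage, steps=5000, rate=0.01, seed=0):
    """Drive one fast unit with a constant stimulus; return the slow weight.

    The fast unit is +1 or -1 at each step, biased by `voltage`.
    The slow weight is a running average of the fast unit's state.
    """
    rng = random.Random(seed)
    p_up = 1.0 / (1.0 + math.exp(-2.0 * voltage))
    weight = 0.0
    for _ in range(steps):
        state = 1 if rng.random() < p_up else -1
        weight += rate * (state - weight)  # slow drift toward recent activity
    return weight


if __name__ == "__main__":
    print(adapt(0.0))  # no stimulus: the weight stays near zero
    print(adapt(1.0))  # sustained stimulus: the weight settles well above zero
```

The two timescales are the point of the sketch: the fast unit reacts immediately, while the slow variable changes only under sustained stimulation, much like a synapse that strengthens with repeated use.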

A New Kind of Future

Our current computing hardware requires the dangerous mining of rare elements and materials, and computing with cobalt’s quantum states could be simpler, cheaper, and more efficient. But it will still be a while before we see this innovation in our data centers and computing models. It will need to prove its ability before we see it being used in Silicon Valley.

The team must still figure out how to seamlessly scale the system and demonstrate its use with a real algorithm. We’ll also need to develop a machine built around this new technology. Although there’s still a lot of work to be done, Khajetoorians is excited for the future of his research. After all, his team’s work may be the foundation of AI’s new future.